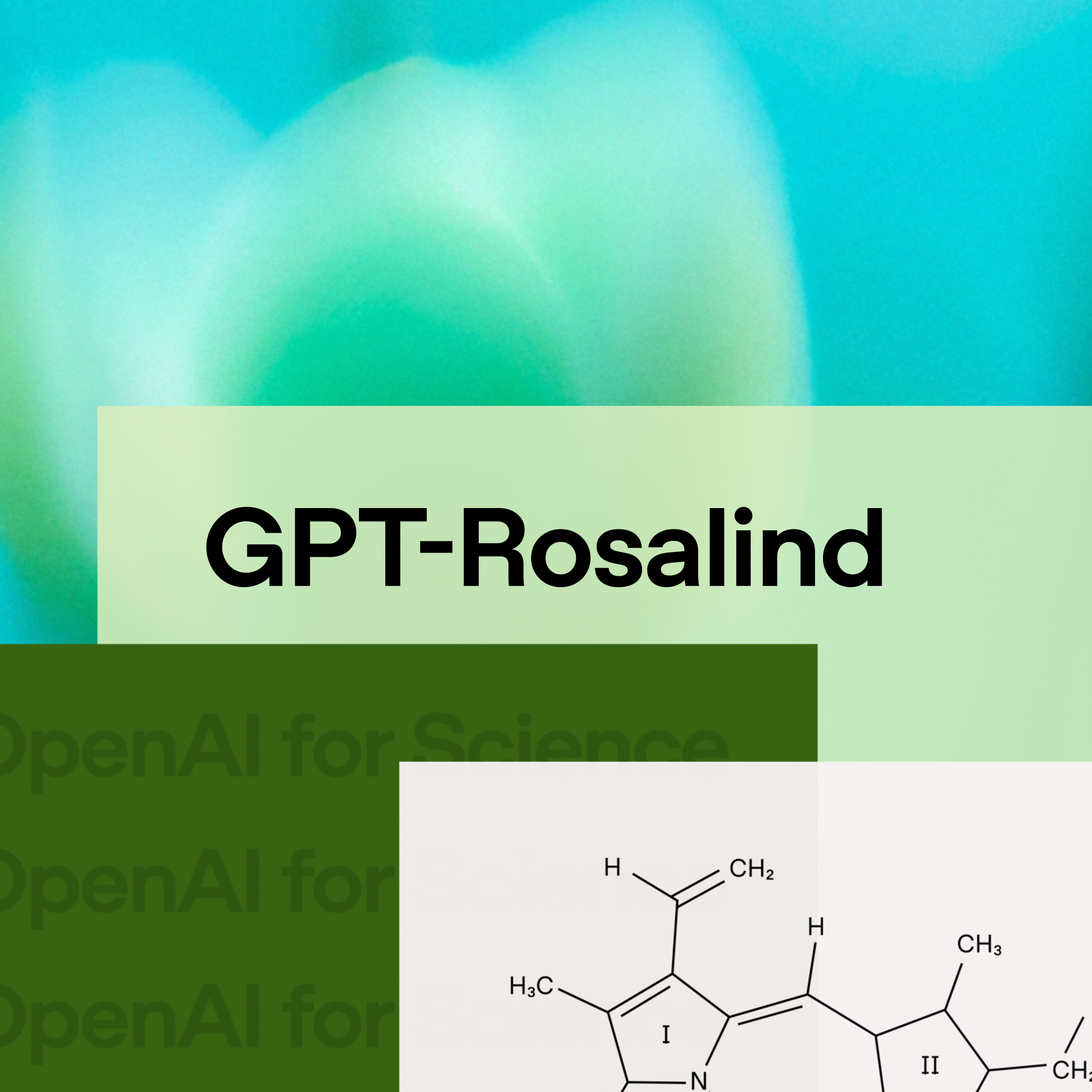

GPT-Rosalind (OpenAI): a frontier reasoning model for biology, drug discovery, and translational medicine. 50+ scientific tools and databases are available via a new Life Sciences plugin for Codex. BixBench: 0.751, the highest among models with published scores. Dyno Therapeutics RNA prediction: above the 95th percentile of human experts.